What we'll do

A simple classification problem. We plot how the loss decreases and gradually shrink the batch size, taking care not to overfit.

Step-by-step details

Load the data and add polynomial features

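    import numpy as np
    import pandas as pd
    from sklearn.preprocessing import PolynomialFeatures

    # load data
    data_train = pd.read_csv('./training_data.csv')
    data_tornm = pd.read_csv('./test_data.csv')
    # every column but the last is a feature; the last column is the label
    # (.iloc replaces the .ix indexer, which newer pandas no longer provides)
    X, y = np.array(data_train.iloc[:, :-1]), np.array(data_train.iloc[:, -1])

    # extend data with degree-2 polynomial features
    X_p = PolynomialFeatures(2).fit_transform(X)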
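To get a feel for what the degree-2 expansion does to the feature matrix, here is a minimal sketch on a toy array. The values in `X_toy` are made up for illustration and are not from the training data. With d input features, `PolynomialFeatures(2)` produces (d+1)(d+2)/2 columns, including the constant bias column.

```python
import numpy as np
from sklearn.preprocessing import PolynomialFeatures

# Toy input (hypothetical values): 3 samples, 2 features a and b.
X_toy = np.array([[1.0, 2.0],
                  [3.0, 4.0],
                  [5.0, 6.0]])

# Degree 2 yields the columns [1, a, b, a^2, a*b, b^2].
X_toy_p = PolynomialFeatures(2).fit_transform(X_toy)

print(X_toy.shape, '->', X_toy_p.shape)  # (3, 2) -> (3, 6)
print(X_toy_p[0])                        # [1. 1. 2. 1. 2. 4.]
```

The column count grows quadratically with the number of raw features, which is worth keeping in mind when sizing the first layer.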

Define the model

    # create the model
    model = Sequential()
    model.add(Dense(128, input_dim=X_p.shape[1], activation='relu'))
    model.add(Dense(16, activation='linear'))
    model.add(Dense(1, activation='sigmoid'))
    model.compile(optimizer='rmsprop', loss='binary_crossentropy', metrics=['accuracy'])

Since this is binary classification, the final layer has a single output unit.
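Why one unit is enough can be sketched in plain NumPy: the sigmoid output is read as the probability of class 1, and the probability of class 0 is its complement. The helper names and the `logits` values below are hypothetical, chosen only for illustration. The loss is a hand-written version of binary cross-entropy, not the Keras implementation itself.

```python
import numpy as np

def sigmoid(z):
    """Squash a real-valued score into the interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def binary_crossentropy(y_true, p, eps=1e-7):
    """Mean negative log-likelihood of the labels under probabilities p."""
    p = np.clip(p, eps, 1.0 - eps)  # avoid log(0)
    return float(np.mean(-(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))))

logits = np.array([-2.0, 0.0, 3.0])  # hypothetical pre-activation outputs
y_true = np.array([0.0, 1.0, 1.0])   # hypothetical labels

p_class1 = sigmoid(logits)           # probability of class 1
p_class0 = 1.0 - p_class1            # class 0 is implied, no second unit needed
labels = (p_class1 >= 0.5).astype(int)

print(p_class1, labels, binary_crossentropy(y_true, p_class1))
```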

Track the decrease of the cost function for plotting

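    class LossHistory(Callback):
        """Record the training loss after every batch."""

        def on_train_begin(self, logs=None):
            # reset at the start of each fit() call
            self.losses = []

        def on_batch_end(self, batch, logs=None):
            logs = logs or {}
            self.losses.append(logs.get('loss'))

    losshist = LossHistory()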

Train the model defined above

    model.summary()
    # Keras 1 called this argument nb_epoch; Keras 2 and later use epochs
    model.fit(X_train, y_train, epochs=5, batch_size=500, verbose=2, callbacks=[losshist])
    scores = model.evaluate(X_test, y_test, verbose=2)

Running this prints output like the following:

    ____________________________________________________________________________________________________
    Layer (type)                     Output Shape          Param #     Connected to
    ====================================================================================================
    dense_9 (Dense)                  (None, 128)           32512       dense_input_4[0][0]
    ____________________________________________________________________________________________________
    dense_10 (Dense)                 (None, 16)            2064        dense_9[0][0]
    ____________________________________________________________________________________________________
    dense_11 (Dense)                 (None, 1)             17          dense_10[0][0]
    ====================================================================================================

Use the parameter counts in this summary to judge whether the model is too complex or too simple for the problem at hand.
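The parameter counts in the summary can be reproduced by hand, which helps when judging model size. A fully connected layer has one weight per input-output pair plus one bias per output unit. In the sketch below the input width of 253 is not stated in the post; it is inferred from the first row of the summary (32512 / 128 - 1). The helper `dense_params` is a made-up name for illustration.

```python
def dense_params(n_in, n_out):
    """Weights (n_in * n_out) plus one bias per output unit."""
    return n_in * n_out + n_out

# Inferred from the summary, not stated in the post: 32512 / 128 - 1 = 253 inputs.
n_features = 32512 // 128 - 1

layers = [(n_features, 128), (128, 16), (16, 1)]
counts = [dense_params(n_in, n_out) for n_in, n_out in layers]

print(counts)       # [32512, 2064, 17]
print(sum(counts))  # 34593
```

Note that 253 = (21+1)(21+2)/2, which would be consistent with 21 raw features expanded to degree 2, though the post does not say how many raw features there are. Almost all of the parameters sit in the first layer, so the width of the polynomial expansion dominates the model size.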

Reduce the batch size while checking for improvement on each loop

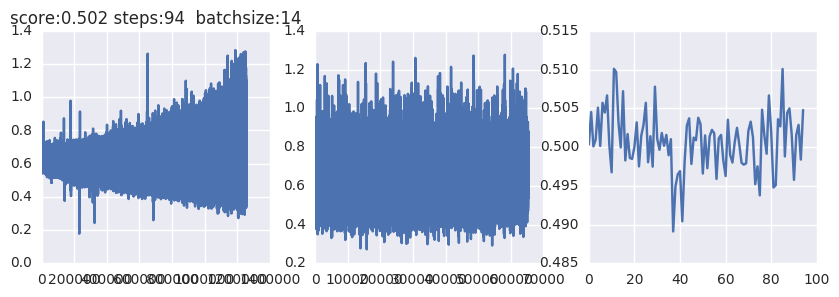

    LOOP = 100
    losses, rocaucs = [], []
    for i in range(LOOP):
        # train; integer division keeps batch_size an int under Python 3
        batch_size = 200 * (LOOP - i + 1) // LOOP
        model.fit(X_train, y_train, epochs=10, batch_size=batch_size, verbose=2, callbacks=[losshist])
        scores = model.evaluate(X_test, y_test, verbose=2)

        # roc auc
        pred = model.predict(X_test).ravel()
        rocaucs += [roc_auc_score(y_test, pred)]
        print('logloss:', log_loss(y_test, pred), 'rocauc', rocaucs[-1])

        # losshist: losses keeps every batch so far, losshist.losses only the latest fit()
        losses += list(losshist.losses)
        plt.figure(figsize=(10, 3))
        plt.subplot(131)
        plt.plot(losses)
        plt.title('score:' + str(scores[1])[:5] + ' steps:' + str(i)
                  + ' batchsize:' + str(batch_size)[:5])
        plt.subplot(132)
        plt.plot(losshist.losses)
        plt.subplot(133)
        plt.plot(rocaucs)
        plt.show()
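The batch-size schedule from the loop above can be inspected on its own before committing to a long training run. This sketch uses the same formula with integer division. The training-set size `n_train` is a hypothetical value, since the post does not state it.

```python
import math

LOOP = 100

# Same formula as the training loop, with integer division.
schedule = [200 * (LOOP - i + 1) // LOOP for i in range(LOOP)]

print(schedule[:3], '...', schedule[-3:])  # [202, 200, 198] ... [8, 6, 4]

# Hypothetical training-set size, only to show how the update count grows.
n_train = 10_000
steps_per_epoch = [math.ceil(n_train / b) for b in schedule]
print(steps_per_epoch[0], steps_per_epoch[-1])  # 50 2500
```

Because of the `+1` in the formula the schedule starts at 202 rather than exactly 200, and it ends at 4. Small batches mean many more weight updates per epoch, so the later loops take much longer than the early ones.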

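This is a fairly brute-force approach, but we shrink the batch size gradually from about 200 while watching the loss. From left to right, the plots show the loss over all steps so far, the loss over the most recent 10 epochs, and the ROC AUC across loops. In this case the model is making essentially random predictions (the ROC AUC stays close to 0.5), so the preprocessing probably needs to be rethought.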
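As a reference point for reading the ROC AUC panel, the following sketch compares scores that carry no information about the labels with scores that do. All data here is synthetic and generated only for illustration. It is not the data from this post.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

rng = np.random.default_rng(0)
n = 20_000

y = rng.integers(0, 2, size=n)                 # synthetic binary labels
random_scores = rng.random(n)                  # unrelated to the labels
informative_scores = y + rng.normal(0.0, 1.0, size=n)  # shifted by the label

auc_random = roc_auc_score(y, random_scores)
auc_informative = roc_auc_score(y, informative_scores)

print('random scores     :', round(auc_random, 3))
print('informative scores:', round(auc_informative, 3))
```

Scores unrelated to the labels land near 0.5, while even noisy but informative scores sit clearly above it. A model whose ROC AUC never leaves the neighborhood of 0.5 is therefore not extracting usable signal from its inputs.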

Full code

    from keras.models import Sequential
    from keras.layers import Dense
    from keras.callbacks import Callback
    import pandas as pd
    import numpy as np
    import matplotlib.pyplot as plt; plt.style.use('ggplot')
    from sklearn.metrics import roc_auc_score, log_loss
    from sklearn.model_selection import train_test_split
    from sklearn.preprocessing import PolynomialFeatures
    %matplotlib inline

    data_train = pd.read_csv('./_training_data.csv')
    data_test = pd.read_csv('./test_data.csv')
    # .iloc replaces the .ix indexer, which newer pandas no longer provides
    X, y = np.array(data_train.iloc[:, :-1]), np.array(data_train.iloc[:, -1])

    # extend data
    X_p = PolynomialFeatures(2).fit_transform(X)

    # callback
    class LossHistory(Callback):
        def on_train_begin(self, logs=None):
            self.losses = []

        def on_batch_end(self, batch, logs=None):
            logs = logs or {}
            self.losses.append(logs.get('loss'))

    # split data
    X_train, X_test, y_train, y_test = train_test_split(X_p, y, test_size=0.05, random_state=42)

    # create the model
    model = Sequential()
    model.add(Dense(128, input_dim=X_p.shape[1], activation='relu'))
    model.add(Dense(16, activation='linear'))
    model.add(Dense(1, activation='sigmoid'))
    model.compile(optimizer='rmsprop', loss='binary_crossentropy', metrics=['accuracy'])
    model.summary()

    losshist = LossHistory()
    # Keras 1 called this argument nb_epoch; Keras 2 and later use epochs
    model.fit(X_train, y_train, epochs=5, batch_size=500, verbose=2, callbacks=[losshist])
    scores = model.evaluate(X_test, y_test, verbose=2)

    LOOP = 100
    EPOCH = 10
    losses, rocaucs = [], []
    for i in range(LOOP):
        # train
        # shrink the batch size little by little (integer division for Python 3)
        batch_size = 200 * (LOOP - i + 1) // LOOP
        model.fit(X_train, y_train, epochs=EPOCH, batch_size=batch_size, verbose=2, callbacks=[losshist])
        scores = model.evaluate(X_test, y_test, verbose=2)

        # roc auc
        pred = model.predict(X_test).ravel()
        rocaucs += [roc_auc_score(y_test, pred)]
        print('logloss:', log_loss(y_test, pred), 'rocauc', rocaucs[-1])

        # losshist
        losses += list(losshist.losses)
        plt.figure(figsize=(10, 3))
        plt.subplot(131)
        plt.plot(losses)
        plt.subplot(132)
        plt.plot(losshist.losses)
        plt.subplot(133)
        plt.plot(rocaucs)
        plt.show()